Table of Contents

Speculations

During the time of crisis, many smart people try to promote themselves as predictors of future events. Unfortunately, we view that most are “speculators” rather than “smart predictors”. JP Morgan,1 provide an estimate of the peak of Malaysian Covid cases at \(\sim 6,300\) by mid-April, MIER2 estimate of the peak at around mid-April with \(\sim 5,000\) cases. In the Star online news,3 we can observe comments by various Malaysian based experts: Prof. Kamarul of USM says “the numbers of JP Morgan is too low (assuming reproduction rates of 1.7), and it should be five times higher and the peak of the outbreak may happen in June or July this year". Universiti Malaya virologist Prof Dr. Sazaly Abu Bakar commented ”that the number of positive cases was not going to “subside any time soon”, adding that JP Morgan’s prediction of 6,300 cases by mid-April was “very conservative”".

To add to this speculation frenzy is claims like: “Selangor task force for covid 19 turns to tech to contain-outbreak” by Dr. Dzulkefly Ahmad (former Health Minister)4, which give the impression that high-tech (of Big Data and Machine Learning) is the way to go.

More dire predictions come in the form of “.. the computer models project a total of 81,114 deaths in the U.S. over the next four months. Most of those deaths are expected to occur during April, peaking at more than 2,300 deaths per day. That rate is projected to drop below 10 deaths per day sometime between May 31 and June 6”.5

And a more educated prediction is by the Imperial College team6, led by Niel Ferguson, which says: “In total, in an unmitigated epidemic, we would predict approximately 510,000 deaths in GB and 2.2 million in the US, not accounting for the potential negative effects of health systems being overwhelmed on mortality.”.

We can continue with many other quotations from the news, but it only adds one thing: more speculations. Yet most sources claim that they rely on complex or sophisticated modeling exercise. What is wrong?

Why models are unreliable?

Models rely on the assumptions of having a sufficient amount of reliable data and various parameters to be estimated by the model by deploying various statistical distribution and processes. And off course, you deploy the “right” model which suited the situation best.

In the case of the Covid epidemic - all of the above assumptions are at best full of suspects. We know there are insufficient data available (the only reliable observations are daily case incidence as we progress), the statistical process and distributions are unknown (during the current stage), and we can’t be sure which model (or class of models) works best.

If we are faced with such a situation, what would be the best option? The answer is to perform models simulations and use a bootstrapping method to “uncover” possible and potential answers to various issues at hand. This implies that any “single number” prediction approach, such as “6,300 people will be infected by mid-April 2020”, is just outright wrong in the methodology of presenting model simulation results. It is just one estimate, with large variations in possible values (i.e. the variance of the estimate) and produced from one possible model out of many possible models. This is not only an irresponsible way of informing the results of modeling but also outright unethical (if the purpose is to inform the public or decision-makers).

An argument based on our experience in modeling Covid reveals a few major points of concern. As an example, when we try to estimate \(R_o\), the disease propagation ratio using any of the standard methods (log-linear, gamma distributions, etc.) produce a variance of the estimates reasonably large, which make any point estimates (such as the mean or median) to be unreliable. And to make matters worse, \(R_o\) is not a linear measure; which means that \(R_o = 4\) is not the same as twice \(R_o = 2\). The concept is akin to the Richter scale, an earthquake of Richter scale 6 is not twice as powerful as scale 3. Why? The scale of 3 is a force based on a magnitude of \(10^3\) and 6 is from a magnitude of \(10^6\). It is based on power law. Therefore, if the errors of \(R_o\) estimate are large (say between 3 to 6), the impact is far different, in the same manner as the Richter scale (\(10^3\) against \(10^6\)). For such large possible variations in the estimate (and its impact), how could we rely on them for any policy decisions?

Models are statements of probabilities

The objective of modeling is to obtain robust estimators, which purpose is to provide decision-makers tools for decision making which holds in a robust (dynamic) environment. Robustness example can be demonstrated by the following example: “we obtain estimated mean/median \(R_o\) to be 2.5, with the range of 2 to 7 based on the credible interval of 95%, and the shape of the distribution of \(R_o\) is highly skewed to the higher estimates (means more towards upper limits of 7).” The statement clearly explains (for the people who understand what \(R_o\) means) that even though the mean/median seems lower, the possibility of actual \(R_o\) number to be much higher is extremely high (and hence dangerous). The statement can be further expanded as “therefore, the estimate of the expected number of people infected after 30 days is 6,000, but the range could be between 4,000 to 15,000 people, with a higher probability of numbers to be larger than 6,000”.

Unfortunately, statements couched in model and probability terms are vague and confusing, hence they will not be understood by the laypeople. Ethically (and scientifically) however, statements must be made in such manner, instead of “point estimations” as exemplified in the news as explained before. Our view is, the better way is to provide simulations of the model(s) and allow model infographics to properly educate the public of any statements to be made.

Simulations are the way forward

What we intend to do here is to provide an example of a simulation, based on one specific cohort, namely the Tabligh. The model is based on a “random network” of specific form - namely a scale-free model of Barabasi-Albert. The model (its variation) is currently being used by the CDC (United States) to perform tracing of Covid-19. Furthermore, any form of contact tracing (such as being done in China and Korea), must deploy some forms of similar models (of a network). The model had been quite reliable (in terms of its robustness) to study SARS, H1N1 and similar outbreak in the past.7. The model that we will employ here is based on the “network theory of epidemics”.8.

The most important parameter that we want to look at is the “effective distance” of a disease to spread. The distance here is measured not in physical distance, instead, it is measured in the “number of contact events within a certain period of time” which took place (or to take place) for the disease to be spread from an originally infected person to the rest of other people.

This leads to questions like what is the “distance” for Covid to reach remote areas such as villages in Kelantan (e.g. Pasir Mas)? If there are no “superspreader events” (e.g. the Ijtima’), based on the pure random chance of people mixing normally, what is the “distance” and what if otherwise is true? Assuming that the period of spread is 30 days and probability of 50% transmission by an infected person when he/she is in contact with another (susceptible) person - what are the answers (what-if questions)? Without “spreader events” it will take about 750 “distance” (number of contact events place over 30 days period). However, with the presence of “spreader events”, the “distance” is only 6.5 times (6.5 events took place within 30 days period).9.

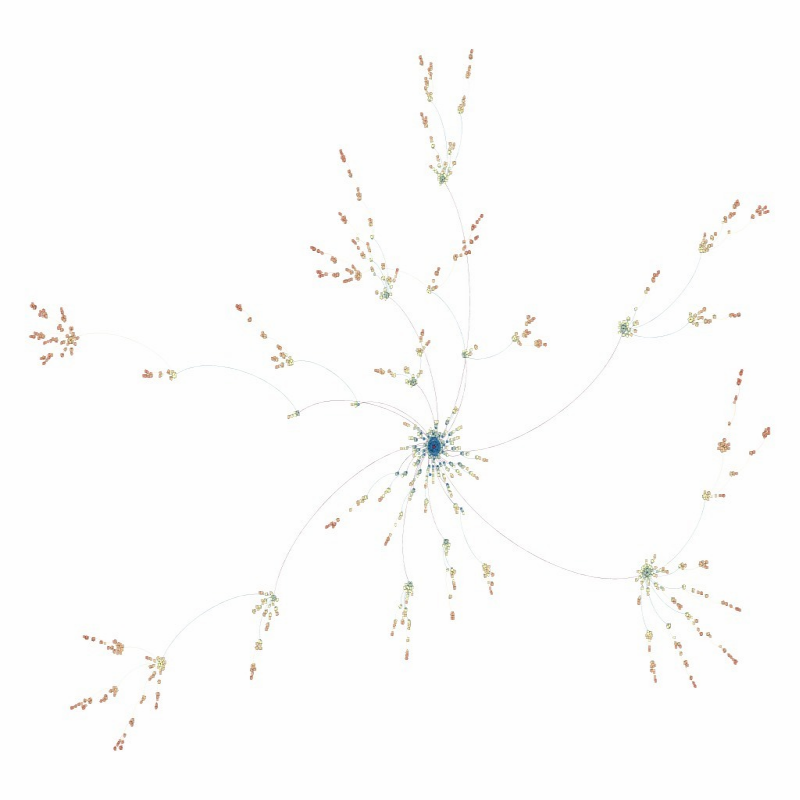

This phenomenon is described by the Figures attached below. The figure is obtained from the Barabasi-Albert network model using a power curve of 2 (i.e. \(R_o\) approximately about 2, on weekly basis; which implies that the number of infected people will be doubled each week).

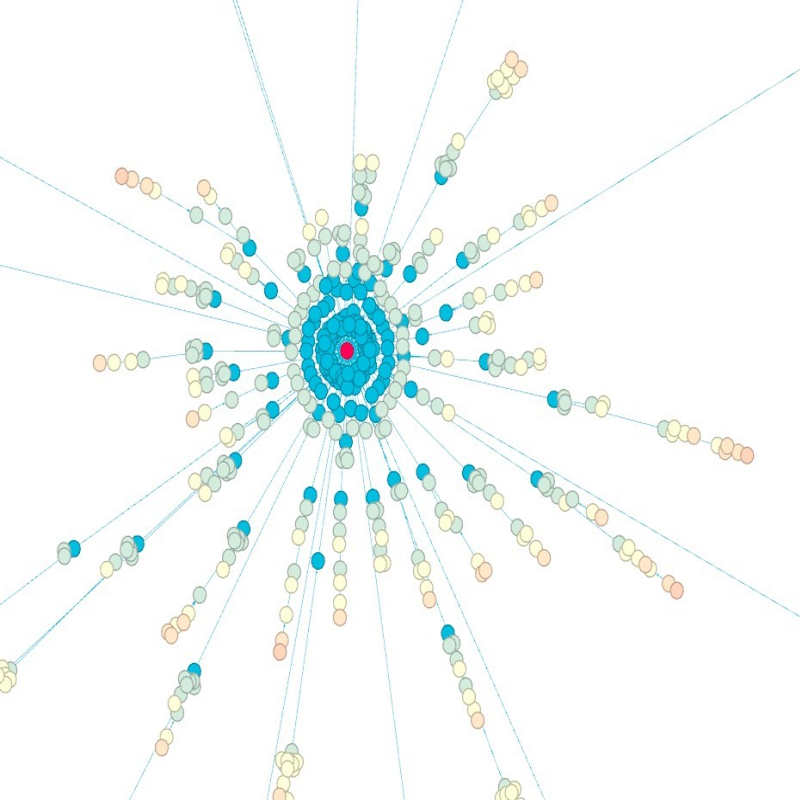

The center of the graph is the Ijtima’, when Case136 over three days infected (predicted by the model) around 300 people directly. This is shown below, where the “red dot” is Case136, and the immediate infections (over 3 days) are represented by “blue dots” (in Figure 2)

How does the disease spread out? How many generations it may generate over a period of 30 days (4 weeks)? And eventually how many people will get infected from the original case (by Case136) eventually over the period (4 weeks)? From the model the answer is as follows:

- There exist many smaller “spreader events” exhibited by the infected people (smaller hubs shown in Figure 1).

- There are about 4 to 5 “generations” created in the process, and the largest generation could be 6.5 (the distance as explained before). This is the number of “hops” in Figure 1.

- The number of people infected from this single source could be as large as 3,000 people (the total number of nodes in Figure 1).

- The number of hospitalization (i.e. confirmed cases) will take a lag time of anywhere from 14 to 21 days (before symptoms, if not confirmed by testing).

- Since as of today, confirmed cases traced to the Tabligh cohorts are about 1,200 to 1,300 - we could expect the numbers related to this group would still increase (i.e. coming in) over the next 14 days by in the range of 200 to 500 cases. This is assuming that 4th generations and 5th generations happened (during the last 30 days). (Nodes which are at the “edges” of the graph in Figure 1)

Predictions

What can we predict from the above model (assuming that the parameters chosen reflect some of the realities observed)?

- The cases which originate from the Tabligh cohorts is NOT yet over (i.e. not all cases had been captured), some new cases may appear over the next few days (or even next two weeks).

- Based on the numbers observed (from reports of cases), it is clear that many of the Tabligh individuals are “prominent actors” as far as contacts with other persons are concerned. Therefore, trying to trace all the people that they had been in contact with is quite precarious and requires aggressive testing strategies (on people they may have got in contact with).

- Tracing the higher generations which emanates from this cohort is extremely critical and important, since by now (and if time is extended further), the numbers of people potentially to be infected is already above 3,000 people from this origination alone.

- If tracing of all potentially infected (from this origin) failed, opening MCO after 14th April is just not possible - with the risk of further spread (despite being small in the number of people) is still high.

Conclude

We have performed the simulation based on purely “generic numbers” without any details of patients’ data and relations between patients. In the case of South Korea, we manage to get such granular data provided to Data Scientists to model the spread and was used for modeling the network and hence allow tracing by their government. We hope that in the future (or as the problem of Covid continues to persist - which might be the case), a more open approach to data-sharing is implemented to allow greater participation by Data Scientists to provide valuable and timely insights to decision-makers.

The problem that we have is not so much of “Big Data” and high-tech as claimed by some quarters - rather, we need modeling and smart modeling. Much can be achieved even with low tech solutions, provided that we use science of data (named Data Science) as our tools.

It is interesting to note that Kaggle as an example, started few competitions among data scientists to use data to help humanity understand (to better predict) Covid’s trajectories, and so far none that have come close to any reliable level of predictions.10

JP Morgan, Asia Pacific equity research, 23 March 2020; https://www.msn.com/en-my/news/national/warning-malaysias-covid-19-infection-rate-will-peak-mid-april-says-analysts/ar-BB11FyDa?li=BBr8Hnu↩

https://www.thestar.com.my/news/nation/2020/03/27/medical-experts-treat-forecast-with-caution↩

https://www.malaymail.com/news/malaysia/2020/04/02/selangor-task-force-for-covid-19-turns-to-tech-to-contain-outbreak/1852835↩

https://www.geekwire.com/2020/univ-washington-epidemiologists-predict-80000-covid-19-deaths-u-s-july/↩

https://www.imperial.ac.uk/media/imperial-college/medicine/sph/ide/gida-fellowships/Imperial-College-COVID19-NPI-modelling-16-03-2020.pdf↩

Pionti, et. al.,Charting the Next Pandemic: Modeling Infectious Disease Spreading in the Data Science Age, Springer, 2019↩

Reference: Chapter 10 “Spreading phenomena”, from Albert-Laszlo Barabasi,Network Science, Cambridge University Press, 2016↩

In network analytics, this is the measure of “betweenness”↩